Keep one small part of your system from taking down the entire system. Let’s look at the bulkhead pattern, the various ways you can think about it, and how it applies to your architecture. It helps with fault tolerance so your system keeps running.

Problem

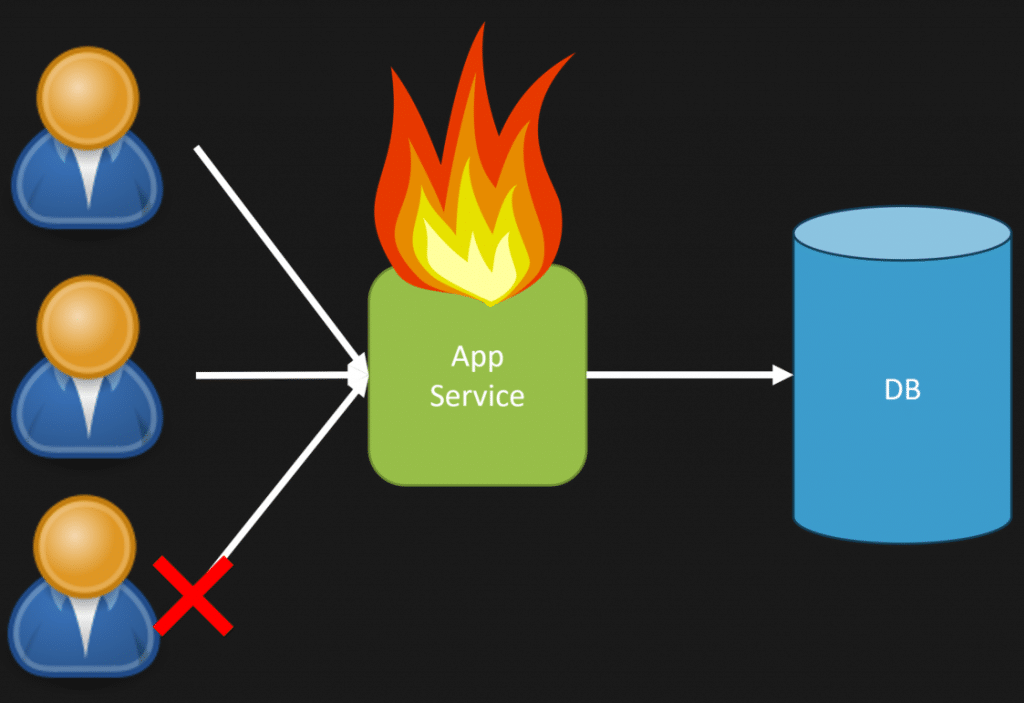

With a large system, it’s easy for one part to affect the performance of other parts. This is true for monoliths but also for more distributed service-oriented systems.

Most systems have some parts that are heavily used and others that see limited use. In most situations, I like to think of the Pareto principle (80/20 rule), where 80% of your system usage likely comes from 20% of the functionality. If you were thinking of an HTTP API, that could mean that 20% of the routes exposed get 80% of the total traffic.

This means a fringe part of your system could cause an outage for the whole system. A bug causing performance issues in a rarely used feature can still consume the resources of the instance it runs on and affect everything else running on that instance.

Isolation

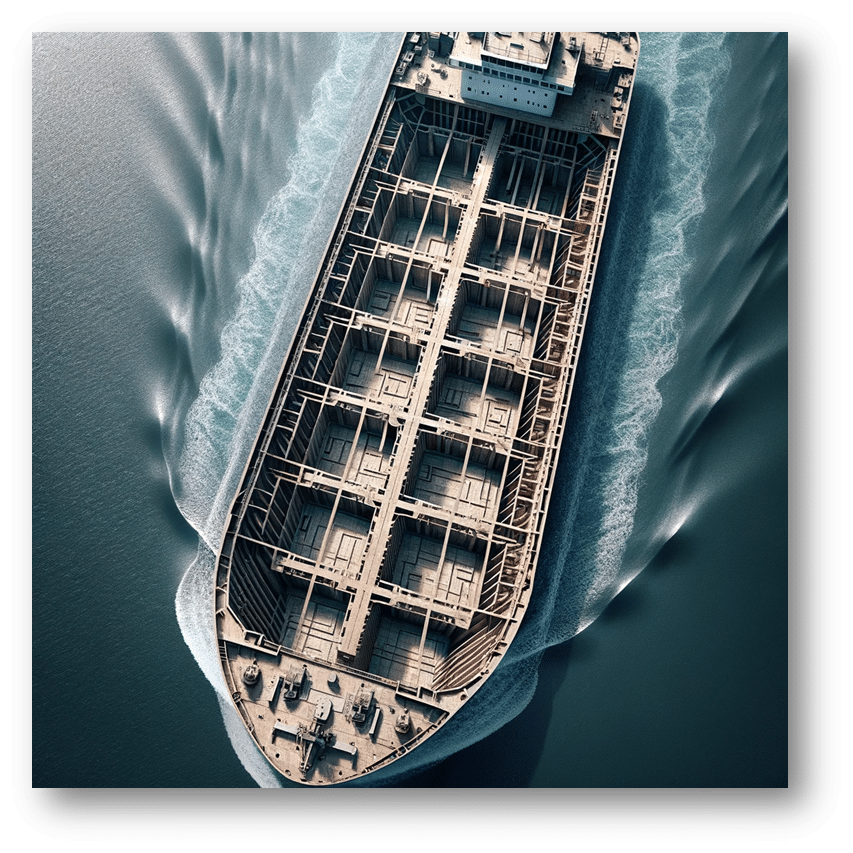

We want to create isolation in our system, just like the bulkheads that divide the hull of a ship into compartments. If one compartment takes on water, the entire ship doesn’t sink. The flooding is isolated to that compartment.

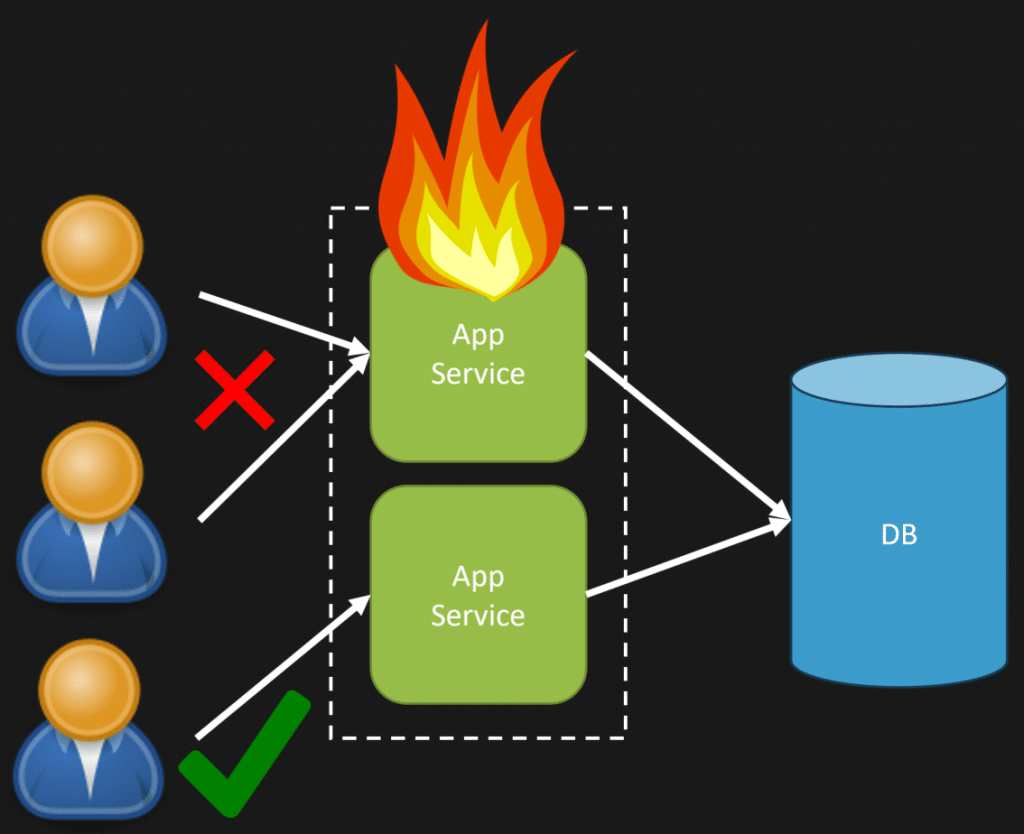

A way around this is to identify the hot paths: the parts of the system that are used more than others, or are more critical than others, and can be isolated.

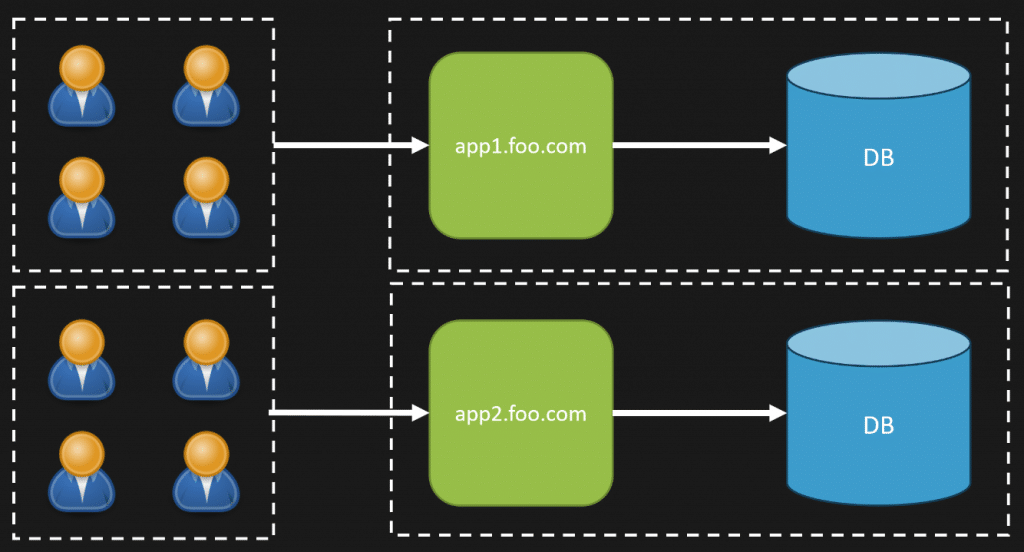

Create isolation for those critical parts of your system so they are segregated from the rest. This applies to any type of system, including a monolith. You can run the exact same codebase in separate instances, scaled horizontally, but route specific traffic to different instances depending on how you want to isolate.

I want to stress that you can do this with a monolith. Just because you want to isolate or scale specific parts of your system does not mean they must be separate physical deployable units. It can be the same deployable, run as multiple instances just as if you were scaling horizontally; the only difference is the routing rules behind your load balancer.
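
To make that concrete, here’s a minimal sketch of the kind of routing rule a load balancer or reverse proxy would apply. The route prefixes and pool names are hypothetical, made up for illustration; in practice you’d likely express this in your load balancer’s own configuration rather than in application code.

```csharp
using System;

// Hypothetical route prefixes and instance pools, purely for illustration.
public static class BulkheadRouter
{
    private static readonly (string Prefix, string Pool)[] Rules =
    {
        ("/orders", "pool-hot"),        // heavily used, critical path
        ("/reports", "pool-reporting"), // fringe, potentially expensive
    };

    // Returns the instance pool that should serve the given request path.
    // Every pool runs the same codebase; only the routing differs.
    public static string SelectPool(string path)
    {
        foreach (var (prefix, pool) in Rules)
        {
            if (path.StartsWith(prefix, StringComparison.OrdinalIgnoreCase))
            {
                return pool;
            }
        }

        // Anything without a dedicated rule shares the default pool.
        return "pool-default";
    }
}
```

With rules like these, a slow report can only exhaust the instances in its own pool, while the order routes keep running on theirs.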

Mix and Match

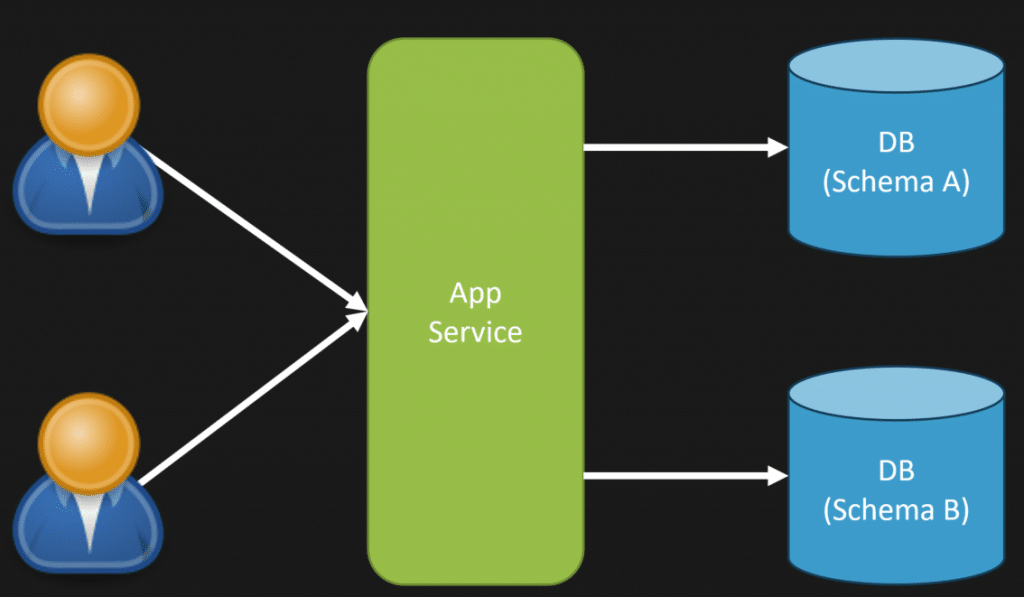

There are other ways to create isolation using the bulkhead pattern. I described doing so at the compute level, but other components exist, such as databases.

If there are intensive database operations in parts of your system, you may isolate those specific schemas into their own physical database instance.

Another typical example is using read replicas to off-load queries for other purposes, such as reporting. Using a read replica for reporting allows all the load for reporting to be delegated to another instance that will not affect your primary database for writes.
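
As a rough sketch of that split, the application can pick a connection string based on the kind of work being done. The `Workload` enum, the `ConnectionSelector` class, and both connection strings are assumptions for illustration, not part of any particular library.

```csharp
using System;

public enum Workload
{
    Transactional,
    Reporting,
}

public static class ConnectionSelector
{
    // Hypothetical connection strings; real values would come from configuration.
    private const string Primary = "Host=db-primary;Database=app";
    private const string ReadReplica = "Host=db-replica;Database=app";

    // Reporting queries go to the replica so they cannot slow down writes
    // on the primary. Everything else stays on the primary.
    public static string For(Workload workload) => workload switch
    {
        Workload.Reporting => ReadReplica,
        _ => Primary,
    };
}
```

Keep in mind that replicas usually lag slightly behind the primary, so this fits reads that can tolerate stale data, which reporting typically can.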

You might already use the database-per-tenant strategy if you’re in a multi-tenant system. This is creating isolation!

You could be sharing compute instances between tenants but still have a database per tenant, or, like the illustration above, you might isolate both compute and database.

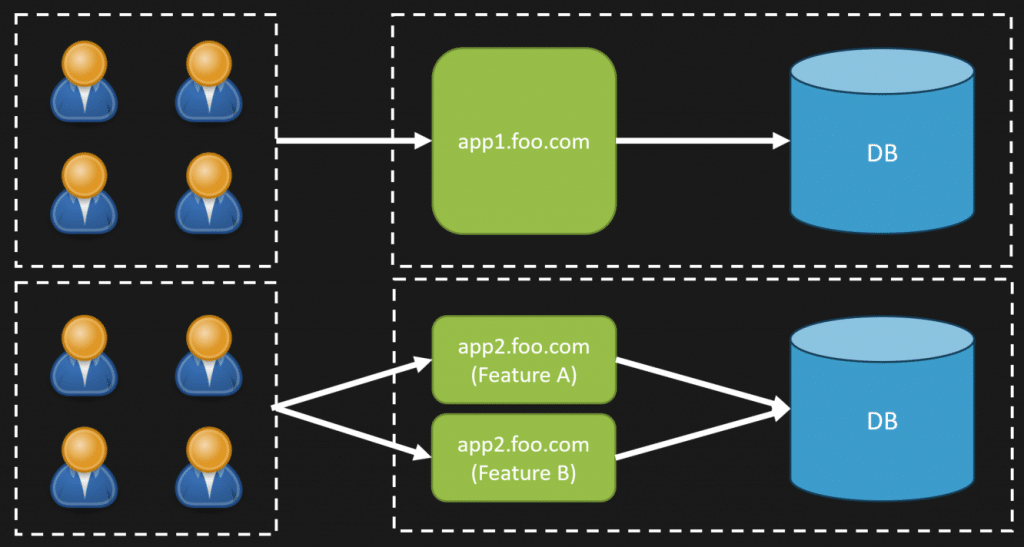

And you can mix and match everything I’ve just described so far.

In this case, you might have different instances for different feature sets and isolate by tenant or schema at your database level. It’s all about isolation.

Queues

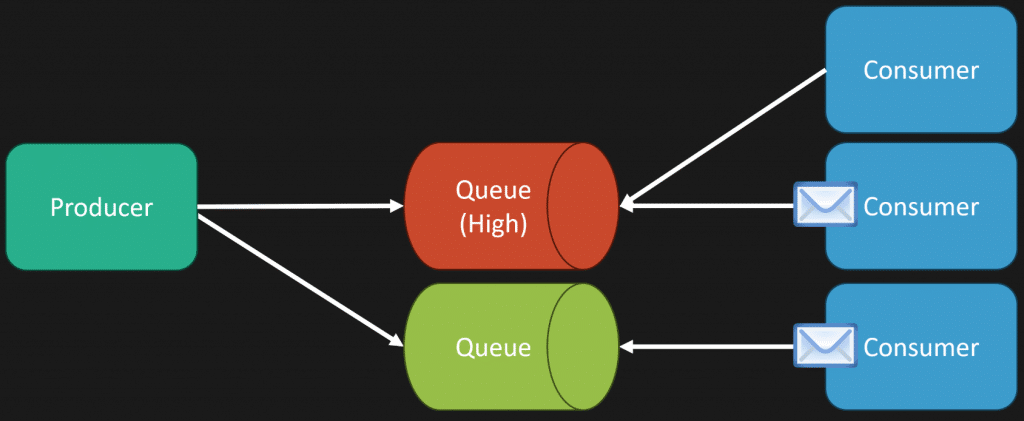

Another good example of the bulkhead pattern is queues. Similar to databases, you might want to start having different queues with different priorities. Some messages might need to be processed sooner than others.

Creating different queues by SLA means you can monitor queue depth, processing time, etc., based on the priority of the messages. You can then use the competing consumers pattern to process more messages concurrently on different queues based on their priority/SLA.
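
Here’s a small in-memory sketch of the idea using `System.Threading.Channels` in place of a real message broker. The `SlaQueue` class and its members are hypothetical names for illustration; with a broker, each instance would map to a separate queue with its own set of consumers.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Channels;
using System.Threading.Tasks;

// One queue per SLA, each with its own pool of competing consumers.
public sealed class SlaQueue
{
    private readonly Channel<string> _channel = Channel.CreateUnbounded<string>();
    private int _depth;

    public SlaQueue(string name, int consumerCount)
    {
        Name = name;
        ConsumerCount = consumerCount;
    }

    public string Name { get; }
    public int ConsumerCount { get; }

    // Messages enqueued but not yet handled; a metric you can monitor per SLA.
    public int Depth => Volatile.Read(ref _depth);

    public void Enqueue(string message)
    {
        Interlocked.Increment(ref _depth);
        if (!_channel.Writer.TryWrite(message))
        {
            Interlocked.Decrement(ref _depth);
            throw new InvalidOperationException($"Queue '{Name}' is completed.");
        }
    }

    // Signals that no more messages will arrive so consumers can finish.
    public void Complete() => _channel.Writer.Complete();

    // Starts ConsumerCount consumers that all read from the same channel.
    public Task RunAsync(Func<string, Task> handler)
    {
        var consumers = Enumerable.Range(0, ConsumerCount)
            .Select(_ => ConsumeAsync(handler))
            .ToArray();
        return Task.WhenAll(consumers);
    }

    private async Task ConsumeAsync(Func<string, Task> handler)
    {
        await foreach (var message in _channel.Reader.ReadAllAsync())
        {
            await handler(message);
            Interlocked.Decrement(ref _depth);
        }
    }
}
```

You might create something like `new SlaQueue("critical", consumerCount: 4)` next to `new SlaQueue("bulk", consumerCount: 1)`. A backlog on the bulk queue then never delays critical messages, and each queue’s `Depth` can be tracked against its own SLA.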

Check out my post Competing Consumers Pattern for Scalability

Throttling

If you’re in a service-oriented environment and making synchronous network calls between services (I don’t recommend this, beware) or interacting with a 3rd party system with synchronous network calls, you may want to create isolation by throttling your own outbound requests.

Typically, we think of the client getting throttled by the server, but you might want to throttle the client.

If you’re making an HTTP call to a third-party service and its regular latency is 100ms, and suddenly, it starts taking 3-5 seconds to return, that can have a significant impact on your system.

The added latency could be caused by you making too many calls to the other system, ultimately impacting other requests.

Because of this, you might want to throttle your own outbound requests. Here’s a simple example in C# using Polly to illustrate.
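
A minimal sketch of what that could look like, assuming Polly’s v7-style `Policy.BulkheadAsync` API (Polly v8 expresses the same idea through a different builder API). The `OutboundThrottle` class and its `GetAsync` method are placeholder names for illustration.

```csharp
using System.Net.Http;
using System.Threading.Tasks;
using Polly;
using Polly.Bulkhead;

public static class OutboundThrottle
{
    private static readonly HttpClient Http = new HttpClient();

    // First argument: max parallel executions (10).
    // Second argument: max actions allowed to wait in the queue (100).
    public static readonly AsyncBulkheadPolicy Bulkhead =
        Policy.BulkheadAsync(10, 100);

    // Every outbound call goes through the bulkhead. When all 10 slots and
    // all 100 queue positions are taken, Polly throws BulkheadRejectedException.
    public static Task<string> GetAsync(string url) =>
        Bulkhead.ExecuteAsync<string>(() => Http.GetStringAsync(url));
}
```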

We have a limit of 10 concurrent requests with a max queue of 100. This means we can execute 10 concurrent outbound HTTP requests, and if any more need to occur, they are queued up to a max of 100. Anything beyond that is rejected rather than left to pile up.

This allows us to limit the number of requests we send concurrently to any downstream service, whether it’s a 3rd party or one of our own.

Bulkhead Pattern

The bulkhead pattern is about creating isolation. It can come in many forms. You can isolate by capabilities of your system, data, and infrastructure such as databases, queues, etc.

As always, there are trade-offs. The biggest is complexity. Adding isolation likely means adding complexity either at a code or deployment level. Is the complexity you’re adding worth it to create the isolation?
